Every request you make to ChatGPT consumes tokens, which are the units of text that the model processes and generates.

In this article, we will explain what ChatGPT tokens are, how they are consumed in different models and languages, how to monitor and refill your token balance, and how to optimize your token usage. We will also provide some examples of how ChatGPT can assist you in your translation projects.

What Is a ChatGPT Token?

A ChatGPT token is a text fragment that the model uses to understand and generate natural language. Tokens can be single characters, words, or parts of words, depending on the tokenizer used and the language. For example, in English, the word “translation” can be split into two tokens: “trans” and “lation”. In Chinese, a single character typically takes one or more tokens.

The number of tokens in a text depends on the length and complexity of the text, as well as the language. For English text, one token is approximately 4 characters or 0.75 words. For Chinese text, a rough rule of thumb is at least one token per character, and often more.
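The rule of thumb above can be turned into a quick estimator. This is a minimal sketch for English text only; the function names are made up for illustration, and a real tokenizer will give different (exact) counts.

```python
import math

# Rules of thumb quoted in the article; they apply to English text only.
CHARS_PER_TOKEN = 4
WORDS_PER_TOKEN = 0.75


def estimate_tokens_from_chars(text: str) -> int:
    """Rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_from_words(text: str) -> int:
    """Rough token estimate based on whitespace-separated word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


if __name__ == "__main__":
    sample = "How are you today?"
    print(estimate_tokens_from_chars(sample))
    print(estimate_tokens_from_words(sample))
```

The two estimates rarely agree exactly, which is a useful reminder that both are approximations rather than a substitute for counting with the model's actual tokenizer.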

How Are Tokens Consumed in Different Models?

OpenAI offers multiple models for different purposes and budgets. Each model has a different price per 1,000 tokens. You can think of tokens as pieces of words, where 1,000 tokens is about 750 words for English text.

The models are divided into two categories: chat models and completion models. Chat models are optimized for dialogue and can generate natural language responses based on your input. Completion models are optimized for following single-turn instructions and can generate various types of text such as summaries, paraphrases, translations, code, etc.

The chat models include gpt-3.5-turbo and GPT-4. The completion models include Ada, Babbage, Curie, and Davinci, their InstructGPT variants, and fine-tuned models. You can find more details about each model’s capabilities and price points on the OpenAI website.

The token consumption for each model depends on two factors: the prompt and the completion. The prompt is the input text that you provide to the model to generate a response. The completion is the output text that the model produces based on your prompt.

For chat models, the prompt is usually a short question or statement that initiates or continues a conversation with the model. The completion is usually a short answer or reply that follows the conversation flow. For example:

Prompt: Hi ChatGPT! How are you today?

Completion: I’m doing great! Thanks for asking.

The prompt is 8 tokens and the completion is 7 tokens. The total token consumption for this request is 15 tokens.

For completion models, the prompt is usually an instruction that specifies what type of text you want the model to generate. The completion is usually a longer text that fulfills your instruction. For example:

Prompt: Summarize this article in three sentences.

Article: Lorem ipsum dolor sit amet…

Completion: This article is about lorem ipsum dolor sit amet… (three sentences)

The prompt is 7 tokens and the article is 200 tokens. The completion is 15 tokens. The total token consumption for this request is 222 tokens.
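The arithmetic in both examples is the same: add up every piece of text sent to the model and every piece it returns. A minimal sketch, using the token counts quoted in this article (the helper name is hypothetical):

```python
def total_tokens(*parts: int) -> int:
    """Sum the token counts of all prompt and completion parts of one request."""
    if any(p < 0 for p in parts):
        raise ValueError("token counts cannot be negative")
    return sum(parts)


# Chat example: 8-token prompt + 7-token completion.
chat_total = total_tokens(8, 7)

# Completion example: 7-token instruction + 200-token article + 15-token completion.
completion_total = total_tokens(7, 200, 15)

print(chat_total)        # 15
print(completion_total)  # 222
```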

As you can see, the token consumption in the completion example is higher because the prompt includes the full article. In general, consumption depends on how long the prompt and completion are rather than on the model category.

How Are Tokens Calculated?

The same word can cost a different number of tokens depending on the language. For example, the word “hello” is one token in English, while its Japanese counterpart “ハロー” is split into several tokens.

Whenever you ask a question or request a completion, a tokenizer splits your text into tokens and maps each one to a numeric ID that the model can process. Each model family uses its own tokenizer and vocabulary, so the same text can produce different token counts on different models.

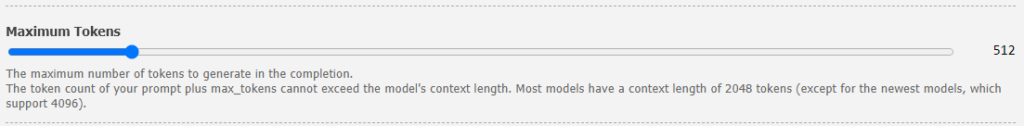

The number of tokens you use depends on the length and complexity of your input and output texts. In ChatGPT, the maximum length of text that can be generated is limited by the model’s maximum context length, which is shared between the prompt and the completion. The limit depends on the model: older GPT-3 models allow about 2,048 tokens (approximately 1,500 words of English text), while gpt-3.5-turbo allows 4,096.
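Because the prompt and the completion share the same context window, a longer prompt leaves less room for the answer. The sketch below illustrates that budget; the function name is hypothetical, and the 2,048-token limit is just the example figure from the paragraph above.

```python
def max_completion_tokens(context_length: int, prompt_tokens: int) -> int:
    """Tokens left for the completion once the prompt has been counted."""
    if context_length < 0 or prompt_tokens < 0:
        raise ValueError("token counts cannot be negative")
    # A prompt that already fills the window leaves no room at all.
    return max(context_length - prompt_tokens, 0)


# With a 2,048-token context window and a 207-token prompt:
print(max_completion_tokens(2048, 207))  # 1841
```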

How much you pay depends on the model you choose and the number of tokens you use. Prices are quoted per 1,000 tokens. For example, at the time of writing, the gpt-3.5-turbo model costs $0.002 per 1,000 tokens.

How Do Different Languages Consume Different Amounts of Tokens?
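Per-1,000-token pricing makes the cost of a request easy to estimate. A minimal sketch, assuming the $0.002 price quoted above for gpt-3.5-turbo (the function and constant names are hypothetical, and actual prices may change):

```python
# Price quoted in this article for gpt-3.5-turbo; check the OpenAI website for current rates.
GPT35_TURBO_PRICE_PER_1K = 0.002


def estimate_cost(tokens: int, price_per_1k: float) -> float:
    """Estimated cost in US dollars for a given number of tokens."""
    if tokens < 0 or price_per_1k < 0:
        raise ValueError("tokens and price must be non-negative")
    return tokens / 1000 * price_per_1k


# A 222-token request, like the summarization example earlier in the article:
print(f"${estimate_cost(222, GPT35_TURBO_PRICE_PER_1K):.6f}")  # $0.000444
```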

Different languages have different characteristics that affect how many tokens they consume. For example, some languages have longer words than others, some languages use more punctuation marks than others, and some languages have more complex grammar than others.

Generally speaking, languages that use alphabets tend to consume fewer tokens than languages that use logograms (symbols that represent words or concepts). For example, English uses an alphabet of 26 letters, while Chinese uses thousands of logograms. Therefore, a sentence in Chinese will usually consume more tokens than a sentence in English.

However, this is not always the case. Some languages that use alphabets have longer words than others. For example, German often combines multiple words into one compound word, such as “Rindfleischetikettierungsüberwachungsaufgabenübertragungsgesetz”, which means “law for the delegation of monitoring beef labeling”. This 63-letter word is split into many subword tokens, far more than a typical English word.

Another factor that affects token consumption is punctuation. Some languages use more punctuation marks than others to indicate sentence structure, tone, or emphasis. For example, Spanish opens questions and exclamations with inverted marks (¿ and ¡) in addition to the regular marks at the end. These extra marks add to the token count.

A third factor that affects token consumption is grammar. Some languages have more complex grammar than others, requiring more words or modifiers to express the same meaning. For example, Arabic has noun cases and a rich system of verb conjugations that change depending on gender, number, person, tense, mood, and voice. These variations tend to increase the token count.

To illustrate how different languages consume different amounts of tokens, let’s look at an example sentence in five languages: English, Spanish, German, Japanese, and Chinese. The counts are approximate and depend on the tokenizer.

- English: “How are you today?” (5 tokens)

- Spanish: “¿Cómo estás hoy?” (6 tokens)

- German: “Wie geht es dir heute?” (7 tokens)

- Japanese: “今日は元気ですか?” (9 tokens)

- Chinese: “你今天好吗?” (10 tokens)

As you can see, the same sentence consumes different amounts of tokens depending on the language. This means that if you use ChatGPT for translation tasks involving multiple languages, it’s important to keep in mind that token consumption can vary widely.
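One likely contributor to these differences is text encoding. The tokenizers used by GPT models work on UTF-8 bytes, and characters outside the basic Latin alphabet take two or three bytes each, so a short-looking sentence can still be a long byte sequence. The sketch below compares character and byte counts for the five example sentences; it does not compute real token counts, and the helper name is hypothetical.

```python
from typing import Tuple

SENTENCES = {
    "English": "How are you today?",
    "Spanish": "¿Cómo estás hoy?",
    "German": "Wie geht es dir heute?",
    "Japanese": "今日は元気ですか?",
    "Chinese": "你今天好吗?",
}


def utf8_profile(text: str) -> Tuple[int, int]:
    """Return (number of characters, number of UTF-8 bytes) for a string."""
    return len(text), len(text.encode("utf-8"))


for language, sentence in SENTENCES.items():
    chars, size = utf8_profile(sentence)
    print(f"{language}: {chars} characters, {size} UTF-8 bytes")
```

For a byte-level tokenizer, the byte count is an upper bound on the token count, so this gives a feel for the worst case rather than the exact figure.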

Another important factor to consider when using ChatGPT for translation tasks is the quality of the training data. ChatGPT is trained on a vast amount of text data from the internet, which means it has been exposed to a wide range of languages, dialects, and writing styles. However, the quality of this data can vary, which can affect the accuracy of ChatGPT’s translations.

For example, if ChatGPT encounters a sentence that uses uncommon or obscure vocabulary, it may struggle to accurately translate the sentence because it has not seen that particular vocabulary in its training data. Similarly, if ChatGPT encounters a sentence with poor grammar or syntax, it may produce a less accurate translation because it has not been trained on data with similar grammatical errors.

You cannot retrain ChatGPT itself, but you can mitigate these issues by giving it high-quality context: include example translations, glossaries, or style notes in your prompt, or fine-tune a model on data that reflects the languages and writing styles you want it to translate. This can involve curating specific datasets or using pre-existing datasets that have been vetted for quality.

What Happens When You Run Out of ChatGPT Tokens?

When you run out of ChatGPT tokens, you will not be able to use the service until you refill them or wait for your quota to reset. You can refill your tokens by purchasing more credits or subscribing to a plan that suits your needs. You can find the price tables for different models and plans on the OpenAI website. Alternatively, you can wait for your quota to reset at the beginning of each month or billing cycle.

Additionally, when you sign up for ChatGPT, you will be granted an initial spend limit, or quota, and it will increase over time as you build a track record with your application. If you need more tokens, you can always request a quota increase.

How to Save ChatGPT Tokens and Get the Most Out of ChatGPT?

Here are some tips on how to save tokens and get the most out of ChatGPT:

- Choose the right model for the job. Different models have different capabilities and price points. You can compare the features and performance of different models on the OpenAI website. For example, if you only need to follow single-turn instructions, you can use Instruct models that are cheaper than GPT-4 models.

- Use shorter prompts and completions. The longer your prompts and completions are, the more tokens you will use. Try to be concise and specific in your instructions and requests. You can also use parameters like max_tokens or stop to limit the length of your completions.

- Use caching and deduplication. If you have repeated or similar requests, you can use caching and deduplication techniques to avoid generating new tokens every time. You can store your previous responses in a database or a file and retrieve them when needed. You can also use hashes or fingerprints to identify duplicate requests and avoid generating them again.

- Use feedback and evaluation. If you want to improve the quality and accuracy of your responses, you can use feedback and evaluation tools to provide ratings or comments to ChatGPT. You can also contribute evals to help OpenAI improve the model for different use cases.
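The caching and deduplication tip can be sketched as follows. This is a minimal in-memory illustration, not a production design: `ResponseCache` and the `generate` callable are hypothetical stand-ins for whatever code actually calls the model, and the cache treats prompts as duplicates only when they match after collapsing whitespace.

```python
import hashlib
from typing import Callable, Dict


class ResponseCache:
    """Cache model responses keyed by a fingerprint of the prompt."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate          # function that actually spends tokens
        self._store: Dict[str, str] = {}   # fingerprint -> cached response
        self.hits = 0
        self.misses = 0

    @staticmethod
    def fingerprint(prompt: str) -> str:
        """SHA-256 hash of the prompt with runs of whitespace collapsed."""
        normalized = " ".join(prompt.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        """Return a cached response, generating one only on a cache miss."""
        key = self.fingerprint(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._generate(prompt)
        self._store[key] = response
        return response


if __name__ == "__main__":
    # A fake generator stands in for a real model call.
    cache = ResponseCache(lambda prompt: f"(response to: {prompt})")
    cache.get("Translate 'hello' into Spanish.")
    cache.get("Translate  'hello'  into Spanish.")  # duplicate after normalization
    print(cache.hits, cache.misses)
```

To keep answers across runs, the dictionary could be swapped for a file or database, as the tip suggests. Cached answers are only appropriate when the same response is acceptable every time.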